In this past week I have spent an unhealthy amount of time looking at anonymous posts about people’s secret crushes (or inflated egos, but you can’t always tell), and that’s left some deep and permanent mental scars. On the other hand, though, I’ve also earned about a pizza’s worth of AdSense revenue, so it was entirely worth it.

Crushly.info – both a play on the tech startup trend of adding -ly to words to make company names and the cheapest domain we could find – is a site that allows students from Rhodes and UCT to search the entire contents of anonymous crush submission Facebook pages, usually for their own name. It also displays the top ten most common names (or crushees) throughout all posts in a colourful graph on the landing page. My good friend Kieran Hunt wrote the pretty frontend, and I handled collecting, searching and serving the data.

I came up with the idea of writing a search website for the crushes pages a little while after they started, when I noticed how uniform the posting format was – each crush had a heading hashtag, a post body usually containing a single name, and a timestamp.

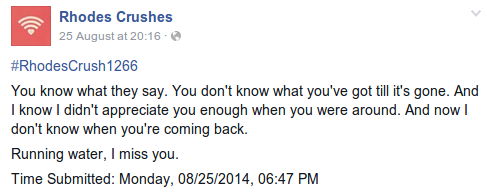

Well, not all of them have names.

So I got to thinking: how easy would it be to pull everything out of the page and store it in a neat little database? The answer: yeah, pretty easy.

Scripting in Python, I first tried a urllib2/Selenium/BeautifulSoup-style approach of scraping the actual webpage to get the data out. That would have meant searching through the complex HTML and JavaScript of a dynamically created Facebook page and simulating user scrolling in order to load everything. Infinitely scrolling webpages and the other asynchronous aspects of the modern web are very nice from a user's perspective, but they present some challenges to a piece of code that would just like to download the webpage once and get everything.

Thankfully, I realised that there was a much easier way of getting the data I wanted before I’d spent too much time digging through HTML. Facebook has this great thing called the Graph API, which outputs Facebook data, such as the contents of a page, in an easily machine-parsable format. Before long, I had the entire page saved in a database.
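As a rough illustration of why this is so much easier than scraping, here is a minimal sketch of walking a paginated Graph API feed. It assumes Python 3 and the usual Graph API response shape (a `data` list plus an optional `paging.next` URL); the function names, the fields kept and the starting URL are illustrative assumptions, not the script actually used for Crushly.

```python
import json
from urllib.request import urlopen


def fetch_json(url):
    """Download a URL and decode its JSON body (needs network access)."""
    with urlopen(url) as response:
        return json.loads(response.read().decode("utf-8"))


def iter_posts(first_url, fetch=fetch_json):
    """Yield every post, following 'paging.next' links until none are left.

    `fetch` is injectable so the paging logic can be tried without
    touching the network.
    """
    url = first_url
    while url:
        page = fetch(url)
        for post in page.get("data", []):
            # Keep only the fields a crush post needs (an assumption).
            yield {
                "id": post.get("id"),
                "message": post.get("message", ""),
                "created_time": post.get("created_time"),
            }
        # A missing 'paging' or 'next' key ends the loop.
        url = page.get("paging", {}).get("next")
```

In real use, `first_url` would be the feed endpoint for the page in question together with a valid access token; both are left out here because they depend on the page and on the API version.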

The next challenge was finding names in the crush posts. The obvious approach, I decided, was to use regular expressions (pattern-matching for text) and that actually worked in quite a few cases, but certainly wasn’t a catch-all, even after the expression was expanded from “two words, each starting with a capital letter” to a behemoth of a thing that accepted hyphens, three or more names, single-quoted nicknames, “van der”s and “du”s and a whole host of other exceptions (courtesy of Craig Marais).
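To give a feel for how that expression grows, here is a small sketch of the two ends of the spectrum. These patterns are reconstructions for illustration only, not the actual regex from the project: the extended one handles hyphens, three or more names and a few lower-case particles, and leaves out single-quoted nicknames entirely.

```python
import re

# "Two words, each starting with a capital letter."
SIMPLE_NAME = re.compile(r"\b[A-Z][a-z]+ [A-Z][a-z]+\b")

# A capitalised word, optionally hyphenated: "Anne" or "Anne-Marie".
WORD = r"[A-Z][a-z]+(?:-[A-Z][a-z]+)*"
# A few lower-case surname particles (an illustrative, incomplete list).
PARTICLE = r"(?:van der|van|du|de)"
# One word followed by one or more further words, each of which may be
# preceded by a particle: "Pieter du Toit", "Mary Jane Watson".
EXTENDED_NAME = re.compile(rf"\b{WORD}(?: (?:{PARTICLE} )?{WORD})+\b")


def find_name(text, pattern=EXTENDED_NAME):
    """Return the first name-like match in the text, or None."""
    match = pattern.search(text)
    return match.group(0) if match else None
```

Even the extended pattern is easy to fool: any run of capitalised words, such as a greeting followed by a name, looks like a name to it, which is part of why it was never a catch-all.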

Kieran came up with a better approach: because commenters would more often than not tag their crushee friends in the comments of a crush post, the best way to get the names would be to check the tags. I rewrote the naming method to collect all the tags and then search for words they had in common with the post commented on, ultimately choosing the one with the largest intersection. This way, the script was able to find surnames where only a first name had been mentioned, correct misspellings, and get a (somewhat) more consistent set of names for compiling the top ten than would otherwise have been possible. The regex was kept as Plan B, for when no tags existed.
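The tag-matching idea can be sketched in a few lines. This is a simplified guess at the logic described above, with hypothetical function names: split both the post and each tagged name into lower-cased words, and pick the tag that shares the most words with the post.

```python
import re


def words(text):
    """Return the set of lower-cased alphabetic words in a piece of text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def best_tag(post_text, tagged_names):
    """Return the tagged name sharing the most words with the post.

    Returns None when no tag shares any word with the post, which is
    the point at which a regex fallback would take over.
    """
    post_words = words(post_text)
    best, best_overlap = None, 0
    for name in tagged_names:
        overlap = len(words(name) & post_words)
        if overlap > best_overlap:
            best, best_overlap = name, overlap
    return best
```

Because the result is the full tagged name rather than the text in the post, a post that only says "Sarah" can still resolve to a first name and surname, and a misspelt surname is replaced by the tagged spelling as long as the first name matches.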

We got the database and the bulk of the frontend working in about a day. After that I lost interest in the whole thing for a week or two, mostly out of a lack of desire to implement a server to connect the two. In the meantime, I did some work on my packet-wrangling Honours thesis using Elasticsearch, a super-fast, super-cool NoSQL-database-style data search engine that uses an HTTP API and lots of JSON.

I reached about chapter five of Elasticsearch: The Definitive Guide before realising that Elasticsearch would be perfect as the Crushly backend – the killer feature being super-quick fuzzy text searching, and the other killer feature being that I knew how to use it. I soon had my Python script rewritten to send data to Elasticsearch instead of SQLite and a simple Node.js server up to route between the client webpage and the Elasticsearch server. There were a few hiccups along the way: I had to learn about search-as-you-type in ES (initially the website would only respond to full names even though the client would send each letter as it came in) and we initially only got the top ten to display a bunch of first names and “Time Submitted”, but we managed to iron most of that out fairly quickly.
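For the curious, the two kinds of search mentioned here look roughly like this as Elasticsearch query bodies. This is a sketch only: the `name` field is an assumed mapping rather than Crushly's real schema, and the helpers just build the JSON that would be sent to the search endpoint.

```python
import json


def fuzzy_query(text, field="name"):
    """Build a match query that tolerates small misspellings."""
    return {
        "query": {
            "match": {
                field: {"query": text, "fuzziness": "AUTO"}
            }
        }
    }


def prefix_query(text, field="name"):
    """Build a query that treats the last word typed as a prefix,
    so partial input such as "sarah jo" can already return hits."""
    return {"query": {"match_phrase_prefix": {field: text}}}


if __name__ == "__main__":
    # Print the request bodies as they would appear on the wire.
    print(json.dumps(fuzzy_query("sara jones"), indent=2))
    print(json.dumps(prefix_query("sarah jo"), indent=2))
```

A plain match query only matches whole indexed words, which would explain a site that responds to full names but not to letters as they are typed; a prefix-style query is one common way around that, though not necessarily the one used here.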

Like any pragmatic website owners, we slapped some Google Analytics and AdSense on the site before making it public and reaped the creepy statistics and small amounts of money. According to the demographics graphs, most of the visitors to Crushly.info are “movie-lovers”, “shutterbugs” and “technophiles”. The age range is squarely 18–24 (not surprising) and the gender ratio is 58% male, 42% female (somewhat surprising).

The university crushes pages are all run by the same group at SACrushes.com, so it was fairly trivial to direct the collection script at the UCT Crushes page and add that section to the site. Adding further universities would be easier still, and remains a potential extension for the future, but many of the pages are very inactive. It’s also probably not unfair to say that people have less of a chance of being tagged by their friends or recognised by often vague descriptions at larger institutions: only 9% of posts from Rhodes Crushes remain nameless in my database, compared to 18% at UCT.

The crush page thing as a whole, of course, is a fad that’s already dying down, so we probably won’t be spending an inordinate amount of time doing further work on the website. Still, it was fun and interesting and it feels good to produce something that other people can use and enjoy. If you have a cool idea, you should just get stuck in and do it, and even if it’s not perfect and you make a lot of mistakes, you’ll learn something.

I have some plans for projects in a similar vein, and I hope to write about those soon. Until then, keep searching your name in the vain hope that one of your friends likes you enough to write a fake post about how attractive you are without telling you just to make you feel better about yourself.

David Yates.
